Vertica Integration with Talend: Tips and Techniques

About Vertica Tips and Techniques

Vertica develops Tips and Techniques documents to provide you with the information you need to use Vertica with third-party products. This document provides guidance using one specific version of Vertica and one version of the vendor's software. While other combinations are likely to work, the specific versions you are using may not have been tested.

Overview

This document provides guidance for configuring Talend Open Studio to connect to Vertica. This document covers integration with Talend Data Integration; it does not specifically cover any other products in the Talend Unified Platform. However, connectivity options for other Talend products should be similar to the options covered here.

Vertica and Talend Open Studio for Data Integration: Latest Versions Tested

| Software | Version |

|---|---|

| Partner Product |

Talend Open Studio for Data Integration 8.0.1 |

| Partner Product Platform | Windows Server 2019 Standard |

| Vertica Client |

Vertica JDBC Driver 9.3.1 (bundled with the product) |

| Vertica Server | Vertica Analytic Database 23.4.0 |

Connecting Talend and Vertica

When you create a connection, Talend automatically downloads the version of the Vertica JDBC driver appropriate for your version of Vertica.

For details on connecting Talend to Vertica, see the Vertica Integration with Talend: Connection Guide.

Installing Vertica Service Packs

Always install the latest service pack when upgrading your driver. Service packs contain important fixes and updates. (The third digit in the release number represents the service pack.) Check the vertica.com downloads page to see release notes for the latest service pack.

Configuring Talend for Use with Vertica

To configure Talend for use with Vertica, you must understand:

Using Talend Components for Vertica

Talend provides several Vertica-specific components that you can use to manage your ETL jobs. Understanding the ETL flow and loading guidelines can help you determine which components to use, and how best to use them.

Understanding ETL Flow and Loading Guidelines

Understanding the basic ETL flows helps you decide which Vertica component and options to use. This section addresses the following load types:

| Type of Load | Use this COPY Option | Results |

|---|---|---|

|

Small Bulk Load COPY (<100MB) |

AUTO |

|

|

Large Bulk Load COPY |

DIRECT |

|

|

Incremental Load COPY |

TRICKLE |

|

Follow these guidelines when loading data into Vertica:

-

Use COPY to load large amounts of data. Using COPY avoids fragmenting the WOS and doesn't require the overhead that using INSERT does.

- If your row count is small (fewer than 1000 rows), use INSERT.

- Load multiple streams on different nodes.

If you are using the INSERT INTO...SELECT...FROM syntax in ETL jobs with large volumes, be sure to use the following syntax.

=> INSERT /+*direct*/ INTO table SELECT…

Commonly Used Vertica Specific Talend Components

The following Talend 8.0.1 components are specifically for use with Vertica:

| Component | Description |

|---|---|

|

tVerticaInput |

Extracts data from Vertica. |

|

tVerticaBulkExec |

Loads from a file. |

|

tVerticaOutputBulk |

Writes to a file. |

|

tVerticaOutputBulkExec |

Writes to a file and loads the data. |

|

tVerticaOutput |

Inserts or updates rows into a Vertica table. |

|

tVerticaRow |

Executes the SQL query stated against the Vertica database. |

tVerticaInput

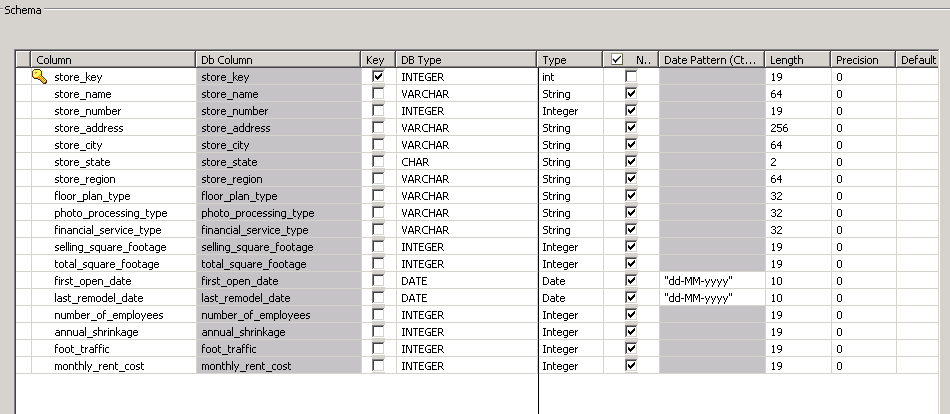

The tVerticaInput component allows you extract data from Vertica. Give special attention to the DATE and VARCHAR fields, as follows:

- DATE fields—All DATE fields must be in MM-DD-YYYY format. Check your DATE fields and correct the format wherever necessary, as shown in the following dialog box.

- VARCHAR fields—Talend examines a sampling of the data when determining the size of the VARCHAR field. If the table contains large VARCHAR values, consider increasing the default column size.

Note You can also use the tMap component to transform or remap the data type.

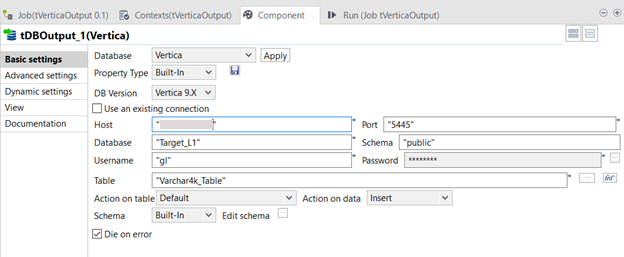

tVerticaOutput

tVerticaOutput provides options that you can change, depending on the size and type of load. Specifically, you should change the Action on Data and Action on Table options, as described in the following sections.

Basic Settings for Trickle or Incremental Loads (Writing to WOS)

Set the Action on Table and Action on Data options according to your use case. For trickle or incremental loads, set Action on Data to INSERT.

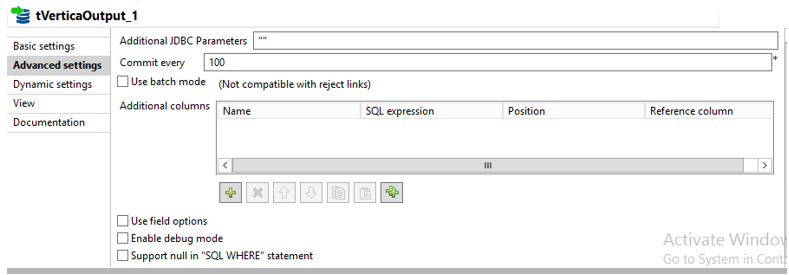

Advanced Settings for Trickle or Incremental Loads (Writing to WOS)

When writing to the WOS, use the default values for Advanced Settings as follows.

To enable fields for INSERT, UPDATE, and DELETE, select the Use field options checkbox. The default settings in the previous graphic generate the following SQL statements:

2023-10-23 11:18:45.146 Init Session:0x7f23f85ee700-a0000000018590 [Txn] <INFO> Starting Commit: Txn: a0000000018590 'INSERT INTO talend_tgt.VarChar4k_Table (DataTypeSet,ValueDesc,VarChar4k_Column) VALUES (?,?,?)' 1721

Note These default settings do not generate a COPY statement.

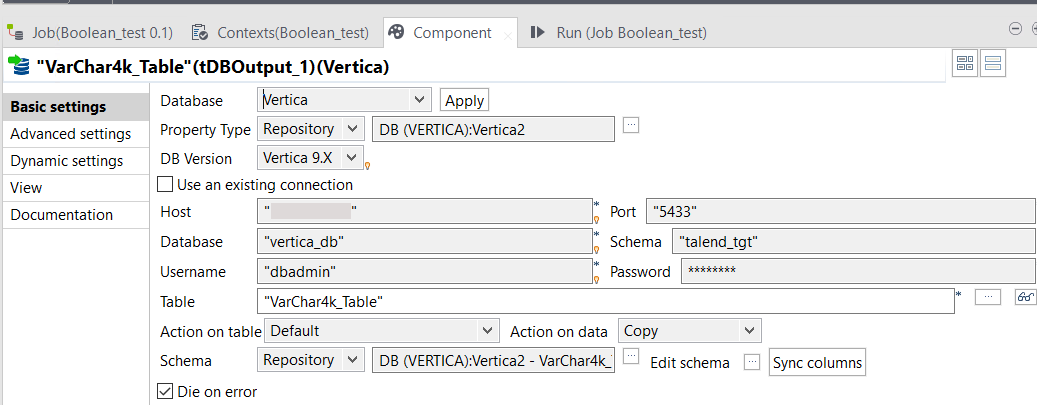

Basic Settings for Large, Bulk Loads (Writing to ROS)

For large, bulk loads, use the DIRECT syntax to write to ROS. To do so, you must set the Action on data option to COPY.

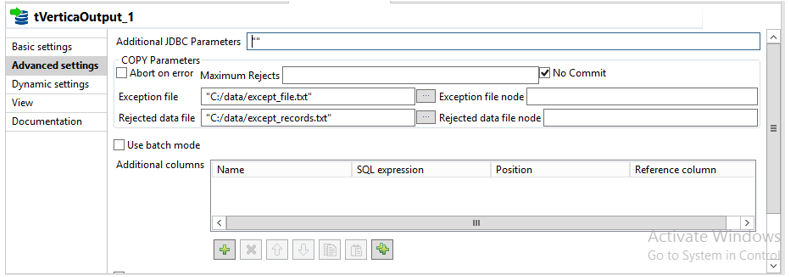

Advanced Settings for Large, Bulk Loads (Writing to ROS)

When using COPY, use Advanced Settings to specify filenames and locations for exception files.

The settings in the preceding graphic generate the following SQL statements:

2023-10-25 03:35:39.018 Init Session:0x7f244e1ed700-a00000000196e5 [Txn] <INFO> Starting Commit: Txn: a00000000196e5 'COPY talend_tgt.VarChar4k_Table (DataTypeSet,ValueDesc,VarChar4k_Column) FROM local STDIN UNCOMPRESSED WITH DELIMITER ';' RECORD TERMINATOR ' ' ENCLOSED BY '|' NULL AS '' EXCEPTIONS 'C:/data/except_file.txt' REJECTED DATA 'C:/data/target_file.txt' DIRECT NO COMMIT'

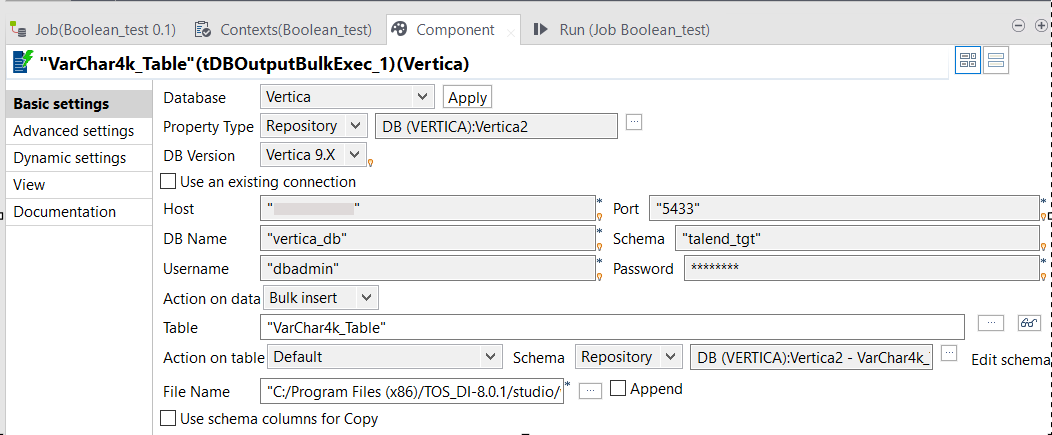

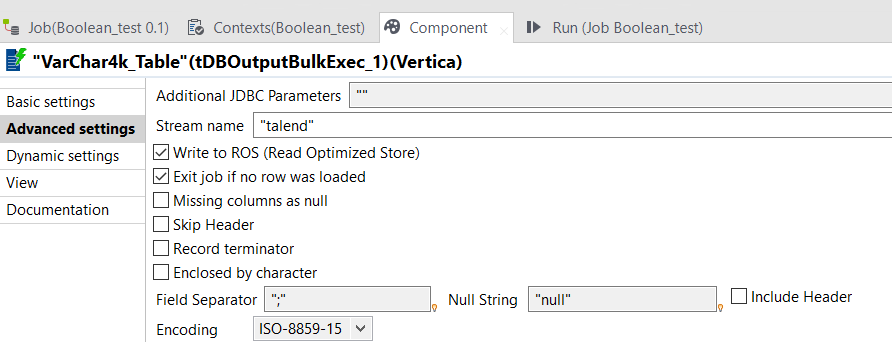

tVerticaOutputBulkExec

The tVerticaOutputBulkExec component writes to a file and then copies the data using standard input (STDIN).

Basic Settings

Advanced Settings

By default, tVerticaOutputBulkExec writes to the ROS.

The settings in the preceding graphic result in the following SQL statements:

2023-10-25 04:31:58.122 Init Session:0x7f244e9ee700-a0000000019747 [Txn] <INFO> Starting Commit: Txn: a0000000019747 'COPY talend_tgt.VarChar4k_Table FROM local STDIN UNCOMPRESSED STREAM NAME 'talend' DELIMITER ';' ESCAPE AS '\' NULL 'null' DIRECT returnrejected'

tVerticaRow

The tVerticaRow component allows you to specify any valid Vertica SQL statements, including COPY statements. Use tVerticaRow to load data into Vertica flex tables, or for other scenarios that require Vertica structures that are not supported with custom Talend components.

Example: High-Performance COPY

In the following example, the source file is on the Vertica server and uses the high-performance Vertica COPY (not COPY FROM LOCAL). Whenever the source files are on the Vertica cluster, load data using high-performance COPY.

"COPY talend.store_target FROM '/home/dbadmin/store.csv' DELIMITER ';' NULL '' DIRECT;"

Example: Loading into Flex Tables

This example shows how to run the Vertica flex example included in the Vertica package directory.

To create the flex table:

=> CREATE FLEX TABLE mountains();

To load data to the flex table:

=> COPY mountains FROM '/opt/vertica/packages/flextable/examples/mountains.json' PARSER FJSONPARSER();

To create the view of the flex table:

=> SELECT COMPUTE_FLEXTABLE_KEYS_AND_BUILD_VIEW('mountains');

Example: Performing Vertica-to-Vertica COPY

This example shows a Vertica-to-Vertica COPY:

“CONNECT TO VERTICA vmart USER dbadmin PASSWORD '' ON 'VertTest01',5433;COPY customer_dimension FROM VERTICA vmart.customer_dimension DIRECT;”

You can also use tVerticaRow to copy from Vertica to Vertica when no transformations are required. To do so, you need to perform additional steps to define the export addresses.

Connecting to a public network requires some configuration. For information about using this statement to copy data across a public network, see Using Public and Private IP Networks in the Vertica documentation.

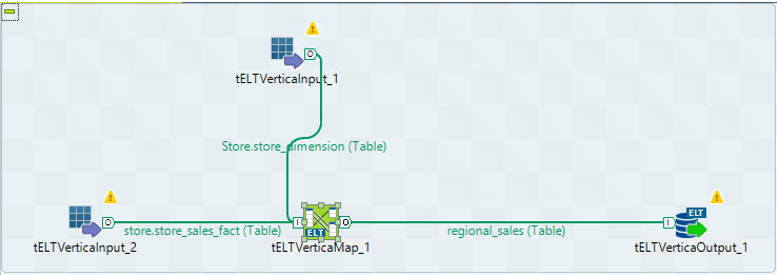

Using Generic Talend ELT Components with Vertica

The ELT family of components group together database connectors and processing components for ELT mode, where the target DBMS becomes the transformation engine. When possible, SQL statements are combined and processed in a single query on the database. The following illustration shows how Talend supports ELT with Vertica. This example uses the generic components for the entire job.

When this job runs, Talend generates the following SQL statements:

=> INSERT INTO store.regional_sales (store_name,store_city,store_region,sales_quantity, sales_dollar_amount)

(SELECT Store.store_dimension.store_name , Store.store_dimension.store_city , Store.store_dimension.store_region , sum(store.store_sales_fact.sales_quantity), sum(store.store_sales_fact.sales_dollar_amount) FROM Store.store_dimension INNER JOIN store.store_sales_fact ON

( store.store_sales_fact.store_key = Store.store_dimension.store_key )

group by Store.store_dimension.store_name, Store.store_dimension.store_city,Store.store_dimension.store_region )

Other Components for Bulk Loading

The tVerticaOutputBulk and tVerticaBulkExec components are generally used together in a two-step process. First, an output file is generated. In the second step, the output file is used in the INSERT operation that loads data into a database.

You can also choose to do both steps at once using the tVerticaOutputBulkExec component. However, using tVerticaOutputBulk and tVerticaBulkExec allows the data to be transformed before it is loaded in the database.

Using the Talend SQL Builder

When using the SQL builder, be sure to include the schema name in the query:

=> SELECT * FROM store.store_dimension;

Enabling Parallelization in Talend

You can enable parallelized data flows. To do so, partition an input data flow of a Talend subjob into parallel processes and execute these processes simultaneously.

Enabling Parallelization in the Talend Enterprise Edition

If you are using the Talend Enterprise Edition, you can enable or disable the parallelization with a single click. Talend Studio then automates the implementation across a given job. For more information about this feature, click the following link:

Enabling Parallelization in the Talend Community Edition

If you are using the Talend Community Edition, add a WHERE clause to the original query to chunk the data. This example results in four chunks.

original_sql + " and hash(" + primaryKey + ") % " + noOfThreads + " = " + i

Example:

=> SELECT if.* FROM inventory_fact if, warehouse_dimension wd WHERE if.warehouse_key=wd.warehouse_key

The preceding query chunks the SELECT operation into the following four queries:

=> SELECT if.* FROM inventory_fact if, warehouse_dimension wd WHERE if.warehouse_key=wd.warehouse_key AND HASH(product_key, date_key) % 4=1; => SELECT if.* FROM inventory_fact if, warehouse_dimension wd WHERE if.warehouse_key=wd.warehouse_key AND HASH(product_key, date_key) % 4=2; => SELECT if.* FROM inventory_fact if, warehouse_dimension wd WHERE if.warehouse_key=wd.warehouse_key AND HASH(product_key, date_key) % 4=3; => SELECT if.* FROM inventory_fact if, warehouse_dimension wd WHERE if.warehouse_key=wd.warehouse_key AND HASH(product_key, date_key) % 4=4;

Choose keys that have equal distribution. For example, the two keys chosen in the preceding example provide the following counts:

Key : 235164 Value : product_key , Key : 50148 Value : date_key count, chunk 7501441, 1 7500008, 2 7497028, 0 7501523, 3

Known Issues

This section describes known issues in Vertica/Talend connections.

Case for Schema Names

Schema names in Vertica are case-insensitive. However, in Talend, you must provide the exact case of schema name.

Schema Name not Provided with Generic JDBC Connection

The Generic JDBC Connection does not append the schema name with the table name; therefore, if you use this connection, jobs will fail in Talend.

Vertica Data Type Mapping and Min-Max Values

Testing has discovered several data-type transfer issues. In the following situations, all the rows are transferred, but the data precision is lost for extremely large values. The loss of precision occurs for INTEGER, DOUBLE, AND DECIMAL data types. For more information, see the Talend known issue at https://jira.talendforge.org/browse/TDI-29446.

To verify that the values described in this issue transfer correctly, map FLOAT to DOUBLE.

Loss of precision also occurs with INTEGER data types. As you can see, the default mapping for our INTEGER data types is INTEGER. If the type is changed to LONG, the data type is changed to BIGINT and the transfers are accurate.

Editing Data Type Mappings

To edit the mappings in Talend Open Studio, navigate to Window > Preferences > Talend > Specific Settings.

You can find the default mappings file (mapping_vertica.xml) for Vertica in the following folders:

Linux:

<Talend_Install_Dir>/ configuration\org.eclipse.osgi\bundles\1707\1\.cp\mappings

Windows:

C:\unzipped\TOS_DI-20170623_1246-V6.4.1\configuration\org.eclipse.osgi\501\0\.cp\mappings

Before you make changes to the mapping_vertica.xml file, contact Talend support for guidance. For more information, see Changing the default data type mapping.