Understanding Vertica Import and Export

Vertica provides import and export capabilities that allow you to move data between two Vertica clusters. Importing and exporting data between clusters is faster than streaming data over STDIN or using a vsql connection for the reasons described in this document.

Import and Export: An Overview

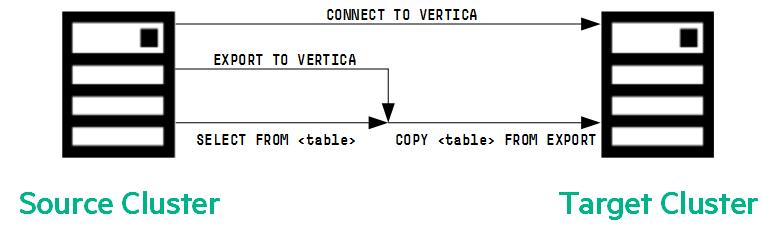

The import and export processes operate as mirror images. A cluster that exports the data executes the equivalent of a SELECT statement. A cluster that imports the data executes the equivalent of a COPY statement. The mechanics of an import/export operation resemble an INSERT... SELECT ... query.

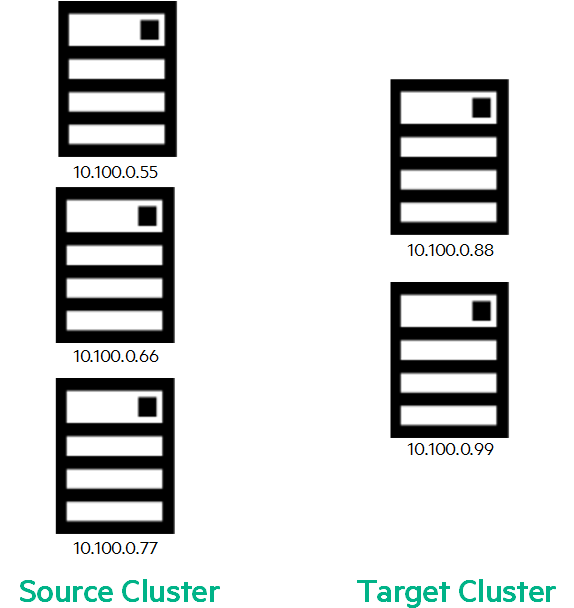

This document refers to the cluster exporting the data as the source cluster, and the cluster importing the data as the target cluster. Data moves from a table in the source cluster to a similar table in the target cluster. These clusters and tables may have different topologies and other characteristics such as:

- Numbers of nodes

- Database versions

- Numbers of projections

- Column data types

- Segmentation

- Users

- Configuration settings

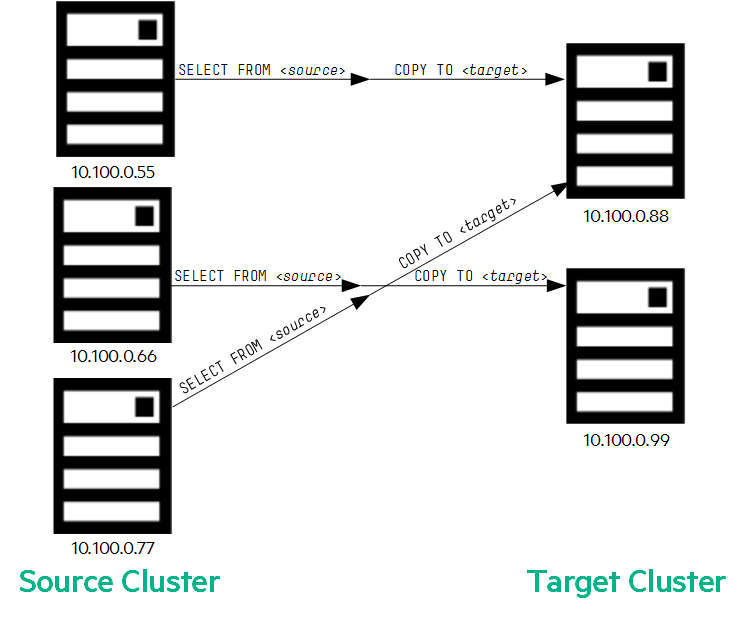

To illustrate how import and export work, the examples in this document use two Vertica clusters:

- Source cluster: 3 nodes (10.100.0.55, 10.100.0.66, 10.100.0.77)

- Target cluster: 2 nodes (10.100.0.88, 10.100.0.99)

How Does Export Work?

On the source cluster, an export operation typically executes as the following commands. First, the source database connects to the target database. Then the source executes the EXPORT TO VERTICA query, which specifies the SELECT statement that retrieves the data to export to the target:

source=> CONNECT TO VERTICA VerticaDBTarget USER dbadmin PASSWORD '' ON '10.100.0.88',5433; source=> EXPORT TO VERTICA VerticaDBTarget.tgt_table (n,a,b) AS SELECT n AS col1, a as col2 , b as col3 from src_table;

The source executes the SELECT statement in the EXPORT statement above to retrieve the data to be exported. The target creates a COPY statement to connect to the source and receives the output of the SELECT statement.

This COPY statement contains the information needed for the target cluster to connect to the source cluster and stream the data. This information includes IP addresses, port numbers, data types and column names, and encoding and compression information.

The COPY statement copies the output from the SELECT statement executes on the source and stores that data on the target.

The following graphic illustrates the EXPORT process:

To see the exact COPY statement that executes on the target database, query the SESSIONS system table. Because multiple concurrent TCP streams are loading data, this COPY statement tends to execute faster than using vsql or copying data from STDIN.

target=> SELECT user_name, node_name, current_statement FROM sessions; user_name | node_name | -----------+----------------------------+---------------------------------------------------- dbadmin | v_VerticaDBTarget_node0001 | COPY tgt FROM EXPORT ':SendExport explainBits:0 ... (1 rows)

Initial connections are made to source node IPs:5434 and then switched to ephemeral ports on the source side.

In the following example:

- v_VerticaDBTarget_node0001 is receiving data from both 10.100.0.55 (source node 1) and 10.100.0.77 (source node 3)

- v_VerticaDBTarget_node0002 is receiving data from 10.100.0.66 (source node 2)

v_VerticaDBTarget_node0001 is doing twice as much work than v_VerticaDBTarget_node0002. The import/export operation can take longer when the source and target nodes are not evenly matched.

COPY tgt ( n, a, b )

FROM EXPORT

':SendExport explainBits:0 planNumber:45035996273709640 tag:1000 status:

:DataPort ip_source:2 oid:45035996273704982

name:v_VerticaDBTarget_node0001 ip:10.100.0.55 address_family:0 port:5434 .

ports: { :DataPort ip_source:2 oid:45035996273704982

name:v_VerticaDBTarget_node0001 ip:10.100.0.55 address_family:0 port:5434 .

:DataPort ip_source:2 oid:45035996273721216

name:v_VerticaDBTarget_node0002 ip:10.100.0.66 address_family:0 port:5434 .

:DataPort ip_source:2 oid:45035996273721220

name:v_VerticaDBTarget_node0003 ip:10.100.0.77 address_family:0 port:5434 . }

db:VerticaDBSource

table:tgt colnames: { :string _:n . :string _:a . :string _:b . }

columns: { :DataType oid:9 type:6 len:3 typmod:7 .

:DataType oid:9 type:6 len:4997 typmod:5001 .

:DataType oid:9 type:6 len:4997 typmod:5001 . }

rle: { :vbool _:0 . :vbool _:0 . :vbool _:0 . }

isCompressed:0 . '

The netstat command shows that the receiving queues on the target systems 10.100.0.88 and 10.100.0.99 are actively receiving data. 10.100.0.88 is receiving data from 10.100.0.55 and 10.100.0.77, and 10.100.0.99 is receiving data from 10.100.0.66.

[ dbadmin@ip-10-100-0-88 ~]$ netstat -tna -p $(pgrep vertica) | grep ESTABLISHED | grep ":5434" Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name tcp 476767 0 10.100.0.88:5434 10.100.0.55:46756 ESTABLISHED 6834/vertica tcp 457636 0 10.100.0.88:5434 10.100.0.77:59568 ESTABLISHED 6834/vertica

[ dbadmin@ip-10-100-0-99 ~]$ netstat -tna -p $(pgrep vertica) | grep ESTABLISHED | grep ":5434" tcp 480539 0 10.100.0.99:5434 10.100.0.66:59074 ESTABLISHED 6359/vertica

Your source and target clusters should have the same number of nodes. Doing so helps balance the load, ensuring that no single node is can hold up the export operation. Having the same number of nodes can also improve data transfer speeds.

When there are different numbers of nodes in the source and target cluster, the multiple sources may be sending data to one target, or one source may be sending data to multiple targets.

How Does Import Work?

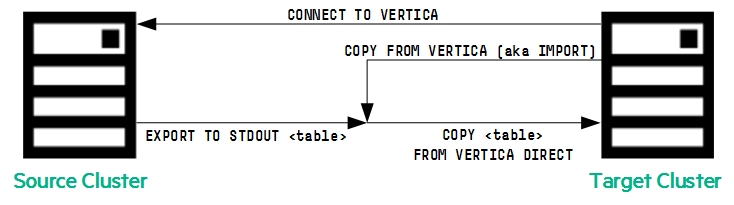

On the target cluster, an import operation typically executes as the following commands.

target=> CONNECT TO VERTICA VerticaDBSource USER dbadmin PASSWORD '' ON '10.100.0.55',5433; CONNECT target=> COPY tgt(n,a,b) FROM VERTICA VerticaDBSource.src(n,a,b) DIRECT;

The CONNECT command results in a connection from the target cluster to the source cluster. The COPY command specifies the data to be imported.

The following graphic illustrates this situation:

To see the EXPORT statement that executes on the source database, query the SESSIONS system table:

source=> SELECT node_name, current_statement, last_statement FROM sessions;

node_name | current_statement | last_statement

----------------------+-----------------------------------------+----------------

v_verticadb_node0001 | export to STDOUT FROM src ( n , a , b )

Network Data Compression

Usually, the network is not the bottleneck in an import or export operation. However, on some slow- or low-bandwidth networks, you can speed data transfer between source and target cluster by enabling network data compression on both the source and the target cluster.

If the network settings are not the same, you see the following error:

target=> COPY tgt(n,a,b) FROM VERTICA verticadb.src(n,a,b) DIRECT; ERROR 5520: verticadb compresses network traffic. verticadb2 does NOT compress network traffic. Please change the configuration to be consistent HINT: Configuration can be changed using set_config_parameter() function

Enable network data compression on both the source and target nodes. To do so, set the CompressNetworkData configuration parameter to 1:

=> SELECT SET_CONFIG_PARAMETER('CompressNetworkData',1);

SET_CONFIG_PARAMETER

----------------------------

Parameter set successfully

(1 row)

Tracking the Progress of Import and Export Operations

You can monitor the progress of an import/export operation using the LOAD_STREAMS system table on the target. The parse_complete_percent field is empty, which means that the data does not need to be parsed on the target cluster.

target=> SELECT read_bytes,parse_complete_percent,unsorted_row_count,sorted_row_count

FROM load_streams WHERE is_executing;

read_bytes | parse_complete_percent | unsorted_row_count | sorted_row_count

------------+------------------------+--------------------+------------------

0 | | 39645696 | 19559826

(1 row)

Unlike loading a CSV file using a COPY statement, which requires the file to be parsed, the IMPORT operation implies a COPY where Vertica does not need to parse at the target cluster. The source streams the data to the target as a tuple.

Specifically monitor progress by looking at the rows received counter on the target:

target=> SELECT node_name, counter_name, counter_value, operator_name

from execution_engine_profiles WHERE is_executing='t'

AND counter_name IN ('rows received') AND Operator_name IN ('Import');

node_name | counter_name | counter_value | operator_name

----------------------------+---------------+---------------+---------------

v_VerticaDBTarget_node0001 | rows received | 124257398 | Import

v_VerticaDBTarget_node0001 | rows received | 123197558 | Import

v_VerticaDBTarget_node0002 | rows received | 237063416 | Import

(3 rows)

To monitor the process on the source, look at the rows sent counter:

source=> SELECT node_name, counter_name, counter_value,operator_name

FROM execution_engine_profiles WHERE is_executing='t' a

AND counter_name in ('rows sent') AND Operator_name IN ('Export');

node_name | counter_name | counter_value | operator_name

----------------------------+--------------+---------------+---------------

v_VerticaDBSource_node0001 | rows sent | 78890185 | Export

v_VerticaDBSource_node0002 | rows sent | 126071726 | Export

v_VerticaDBSource_node0003 | rows sent | 78889767 | Export

(3 rows)

Incremental Export

When you are continually adding data to a table in the source cluster and want to copy the data to the target table in the target cluster, you can perform incremental exports. With an incremental export, you push only recently added data to the target using a query with epoch predicates, or other predicates such as date predicates.

In the following example, 39 is the epoch during which the last successful export took place. The EXPORT statement in the following example exports only the data that was loaded onto the source after epoch 39. The import operation does not support a similar syntax.

source=> COPY cluster1_table1 FROM STDIN DIRECT;

Enter data to be copied followed by a newline.

End with a backslash and a period on a line by itself.

>> 100

>> 101

>> 102

>> 103

>> \.

source=> SELECT epoch, * FROM cluster1_table1;

epoch | i

-------+-----

39 | 2

39 | 3

39 | 5

40 | 101

40 | 102

39 | 1

39 | 4

40 | 100

40 | 103

(9 rows)

source=> CONNECT TO VERTICA VerticaDBTarget USER dbadmin PASSWORD '' ON '10.100.0.88',5433;

CONNECT

source=> EXPORT TO VERTICA VerticaDBTarget.cluster2_table2 AS SELECT * FROM public.cluster1_table1

WHERE epoch > 39;

Rows Exported

---------------

4

(1 row)

Parallel Export

If you have a large amount of data to export, you can speed up the export operations by running multiple exports in parallel:

=> EXPORT TO VERTICA VerticaDBTarget.tgt (n,a,b) AS SELECT n as col1, a as col2 , b as col3 FROM src WHERE epoch > 0 AND epoch <= 1; => EXPORT TO VERTICA VerticaDBTarget.tgt (n,a,b) AS SELECT n as col1, a as col2 , b as col3 FROM src WHERE epoch > 1 AND epoch <= 2; => EXPORT TO VERTICA VerticaDBTarget.tgt (n,a,b) AS SELECT n as col1, a as col2 , b as col3 FROM src WHERE epoch > 2 AND epoch <= 3; => EXPORT TO VERTICA VerticaDBTarget.tgt (n,a,b) AS SELECT n as col1, a as col2 , b as col3 FROM src WHERE epoch > 3 AND epoch <= 4;

Choose the epoch ranges (or other predicates, such as date predicates) so that the number of rows are approximately equal in data size.

Configuring Network Connections for Import and Export

Vertica imports and exports data from one Vertica cluster to another across a private network using the EXPORT TO VERTICA statement and COPY FROM VERTICA statement. By default, the cluster uses the private network for importing and exporting data.

To use the public network, you must configure the system by changing the export address. You can have only one network configuration for each server. You configure the system to use the public network by:

- Identifying the IP addresses of the nodes or clusters on the public network.

- Configuring the database or individual nodes for import/export.

For detailed information on the configuration steps, see Configuring Network to Import and Export Data.

Sequences, Identity, and Column Default Values

The export operation is similar to inserting data. However, when you export data directly from the source, Vertica does not generate the sequence and column default values on the target.

Suppose you have the following sequence in a table named test_seq:

source=> CREATE SEQUENCE seqinc START 101 MAXVALUE 1000 CACHE 7 CYCLE;

source=> CREATE TABLE test_seq (col_seq INT DEFAULT NEXTVAL('seqinc'),data VARCHAR(100)) ;

source=> COPY test_seq (data) FROM STDIN;

Enter data to be copied followed by a newline.

End with a backslash and a period on a line by itself.

>> AAAA

>> BBB

>> CCCC

>> AAAAA

>> BBBBB

>> CCCCC

>> DDDDD

>> EEEEE

>> FFFFF

>> \.

source=> SELECT * FROM source;

col_seq | data

---------+-------

101 | AAAA

102 | BBB

103 | CCCC

104 | AAAAA

105 | BBBBB

106 | CCCCC

107 | DDDDD

108 | EEEEE

109 | FFFFF

(9 rows)

To export this data to the target, execute the following commands on the target:

target=> CREATE SEQUENCE seqinc START 10000

MAXVALUE 100000 CACHE 9 CYCLE;

target=> CREATE TABLE tgt(col_seq INT DEFAULT

NEXTVAL('seqinc'), data VARCHAR(100)) ;

To import the data on the source, execute the following commands on the source:

source=> CONNECT TO VERTICA targetdb USER dbadmin

PASSWORD '' ON '10.100.0.77',5433;

source=> EXPORT TO VERTICA targetdb.tgt AS SELECT * from test_seq;

Rows Exported

---------------

9

(1 row)

Because you specified SELECT *, the target gets the sequence values that the source generated:

target=> SELECT * FROM tgt;

col_seq | data

---------+-------

101 | AAAA

102 | BBB

103 | CCCC

104 | AAAAA

105 | BBBBB

106 | CCCCC

107 | DDDDD

108 | EEEEE

109 | FFFFF

(9 rows)

If you want default values (including sequence, identity) to take effect on the target table, structure the export so that you select only the values for the column data. If you omit the sequence column, the target generates the sequence and identity values:

source=> CONNECT TO VERTICA targetdb USER dbadmin

PASSWORD '' ON '10.100.0.77',5433;

source=> EXPORT TO VERTICA test1.tgt(data) AS

SELECT data FROM test_seq;

Rows Exported

---------------

9

(1 row)

You can see that the sequence on the target does not necessarily follow the sequence order of the source:

target=> SELECT * FROM tgt; col_seq | data ---------+------- 10012 | BBBBB 10013 | CCCCC 10015 | EEEEE 10000 | AAAA 10009 | BBB 10010 | CCCC 10011 | AAAAA 10014 | DDDDD 10016 | FFFFF (9 rows)

Using this approach, you can export the value of the sequence and identity from the source to the target. Alternatively, you can have the target generate sequence and identity columns by omitting the column from the EXPORT/IMPORT statement.

Object-Level Backup and Restore

Vertica 7.2.x provides a new object-level backup/restore capability. This feature allows you to restore individual tables or schemas from any backup that contains those objects without restoring the entire backup.

When the number of nodes in the source and target match and the Vertica server versions are the same, an object-level backup/restore is much faster than performing and import/export operation. However, the vbr.py script is not available when the number of source nodes does not equal the number of target nodes.

Use object-level backup and restore when the number of source and target nodes match.

For More Information

For more information about importing and exporting, see the following topics in the Vertica documentation: