How data analytics plus object store handles a classic problem

Today, VAST Data announced a partnership and integration with Vertica. The partnership combines a popular and proven analytical database with an up-and-coming object storage company. This relationship matters for the future of analytics, because that future is hybrid cloud. Let me explain.

Chasing disparate data

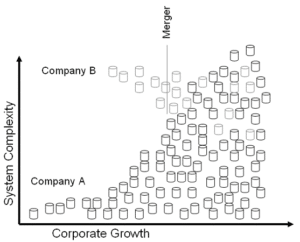

Disparate systems containing disparate data have been a problem for several decades now. Why? Because when companies are busy trying to win revenue, they often don’t think about long-term goals and how sharing data can lead to new opportunities. Instead, they keep adding databases, in the cloud or on-premises, and the number of sources explodes, each with its own level of performance when it comes to data sharing. The problem gets worse as the company grows and acquires other companies. You end up with a mess of data scattered across a wide range of systems, and it’s difficult to derive value from it.

Disparate data complicates data management

Today, as in years past, companies don’t want analytical initiatives spread across tens or hundreds of different platforms. They instead prefer a unified data platform that supports multiple use cases for various types of users.

For some, the solution seems to be a SaaS-based data warehouse. With a cloud-only solution, data can be moved into the cloud and take up residence there in a central location. However, no solution is ever perfect. In some clouds, “noisy neighbors” degrade performance, because workloads operated by different organizations share the same resources. Data ownership is an issue in cloud-only solutions, because the data must be loaded into the vendor’s cloud. And for many organizations, some data must stay on-premises, whether because of regulations or simply by preference.

Hybrid clouds – offering the capability to run analytical workloads in any public cloud or any on-premises environment – provide a solution to these problems. And I think they are the next big thing.

The data lifecycle still applies

Consider the advantages of a hybrid architecture in terms of the data lifecycle. Internal applications may generate data, or data may come into the organization from external sources. Modern architectures typically land external data in object storage data lakes, either in the cloud or on-premises. The data then needs to be cleaned up and transformed, then provided to your organization for advanced analytics in a data warehouse-style form. After several months or several years, when the data may no longer be relevant, you can tier it back to a low-cost object store if you choose.
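The tiering step at the end of that lifecycle can be pictured with a small sketch. This is only an illustration, not a Vertica or VAST feature: the function name `choose_tier`, the tier labels, and the 365-day threshold are all assumptions made up for the example, since the right cutoff depends on how long data stays relevant in your organization.

```python
from datetime import date

# Hypothetical tier labels for illustration; they are not product names.
WAREHOUSE = "warehouse"
ARCHIVE = "object-store-archive"


def choose_tier(last_relevant: date, today: date, hot_days: int = 365) -> str:
    """Return where a dataset should live, based on how old it is.

    hot_days is an assumed threshold; the article only says data may be
    tiered after "several months or several years".
    """
    age_in_days = (today - last_relevant).days
    return WAREHOUSE if age_in_days <= hot_days else ARCHIVE


# Example: one recent dataset and one that has aged out.
recent = choose_tier(date(2024, 1, 1), today=date(2024, 6, 1))
stale = choose_tier(date(2020, 1, 1), today=date(2024, 6, 1))
```

In practice the decision would likely look at query frequency as well as age, but a date cutoff is the simplest policy to reason about.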

Object Store and Analytics

Object store technology and an analytical database like Vertica work beautifully together. Deployed across cloud and on-premises environments, this pairing forms the basis of a hybrid cloud, and it offers several essential features:

- Vertica can access data in the data lake for analytics directly. For example, if you need to perform analytics on a Parquet file in a VAST object store, Vertica can read it where it sits, and the queries are fast.

- Vertica can use object stores for its main data repository in a data warehouse scenario. This is where we optimize storage for more structured data. You can scale up compute separately from your VAST object store for analytical elasticity.

- A Vertica unified system (data lakehouse) allows you to access the raw data from your sources and the refined data in your warehouse in the same way. Just query it. You can even JOIN between the data lake and data warehouse.

- No matter where it is in the data lake or data warehouse cycle, data can be centralized in one place – VAST object storage. In addition, the management capabilities of on-premises object stores make it easier to back up, recover, assure uptime, and tier essential data.
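To make the first and third points above more concrete, here is a minimal sketch that builds the SQL for them. It relies on Vertica’s `CREATE EXTERNAL TABLE ... AS COPY FROM ... PARQUET` form for reading Parquet files in place. Everything else is an assumption for illustration: the helper names, the `lake.clicks` and `warehouse.customers` tables, their columns, and the `s3://example-bucket/...` path. Connecting Vertica to an S3-compatible endpoint such as a VAST cluster takes extra configuration that is not shown here.

```python
def external_table_ddl(table, columns, parquet_uri):
    """Build a CREATE EXTERNAL TABLE statement over Parquet files.

    columns is a list of (name, sql_type) pairs. Inputs are assumed to be
    trusted identifiers; this sketch does no quoting or validation.
    """
    column_list = ", ".join(f"{name} {sql_type}" for name, sql_type in columns)
    return (
        f"CREATE EXTERNAL TABLE {table} ({column_list}) "
        f"AS COPY FROM '{parquet_uri}' PARQUET;"
    )


def lake_warehouse_join(lake_table, warehouse_table, key):
    """Build a query that joins a data lake table to a warehouse table."""
    return (
        f"SELECT w.*, l.* FROM {warehouse_table} AS w "
        f"JOIN {lake_table} AS l ON w.{key} = l.{key};"
    )


# Hypothetical names throughout; substitute your own schema and bucket.
ddl = external_table_ddl(
    "lake.clicks",
    [("customer_id", "INT"), ("url", "VARCHAR(2048)"), ("ts", "TIMESTAMP")],
    "s3://example-bucket/clicks/*.parquet",
)
query = lake_warehouse_join("lake.clicks", "warehouse.customers", "customer_id")
print(ddl)
print(query)
```

Once the external table exists, the JOIN treats it like any other table, which is the “just query it” idea described in the list.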

Why is this important?

Here are a few examples of why you may need a hybrid cloud:

High Availability and Disaster Recovery

Hybrid clouds offer an effective way to remove reliance on a single vendor and, therefore, a single point of failure. In mission-critical applications, companies can run a cloud-based solution while maintaining an on-premises copy for high availability and disaster recovery.

Develop Once and Serve Data Anywhere

There’s value to your developers if they can write one set of services without worrying about cloud service-provider specifics. As your real-life analytical workloads hit the servers, subtle differences are bound to surface in how providers handle complicated JOINs, data loading, short and long queries, or machine learning. The “noisy neighbors” problem may be a factor, too, as some cloud providers share your resources with other clients and make your SLAs unpredictable. The freedom to seamlessly move on-premises allows you to go anywhere that price and performance take you.

When Public Cloud Isn’t Possible

A single data infrastructure does not always meet each line of business’s distinct regulatory and market requirements. While cloud technologies may be attractive to your company, there are many reasons why organizations need to keep data on-premises. For example, regulations may dictate that you keep personally identifiable information (PII) within the country of origin. Configuring an in-country on-premises cloud may be the right solution. In other cases, data is so sensitive that only an on-premises solution will do.