Introduction

When resource pools were first introduced to Vertica, the average computer had a lot less memory than it does today. The default memory size for the Tuple Mover resource pool, 200 MB, reflects the more limited resources of that period. As hardware and software technology has advanced, Vertica users have begun to ask, “I’ve got servers with hundreds of gigabytes of RAM. Can I improve my mergeout performance by allocating more RAM to the Tuple Mover resources pool?” The answer, not surprisingly, is yes.Background

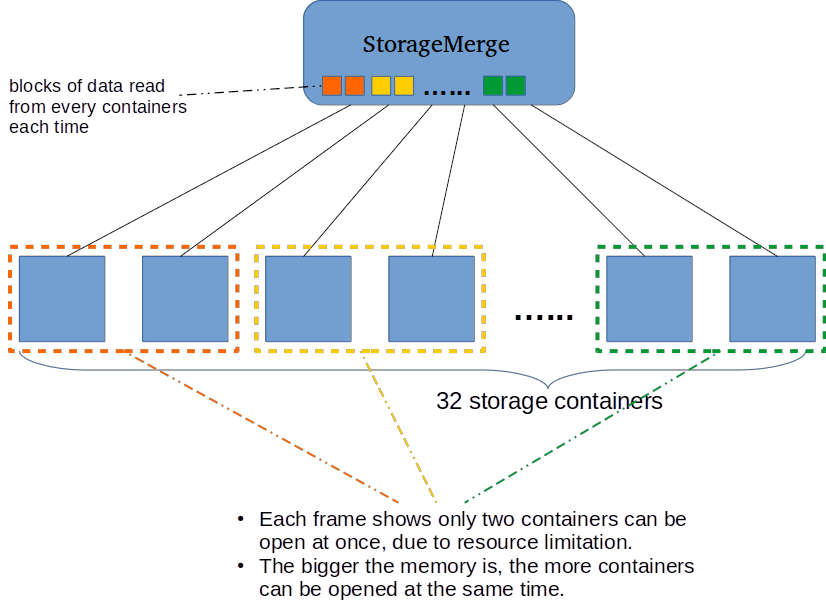

The target amount of memory for a plan is the resource pool’s memory size divided by planned concurrency. Every plan has a lower bound on the amount of memory required to run. Often a plan can run more efficiently given more memory. Mergeout is a relatively simple plan, however, given too little memory a lot of redundant work is done. A Scan operator needs about 1 MB per column to read from a storage container. When the available memory size is small, a mergeout plan opens one or more containers, reads some tuples from each, and closes the containers before moving on to open another set. The Tuple Mover repeats this process until it has read all tuples. The number of tuples the mover reads each time is proportional to the amount of memory available to the plan. Often when a storage container is closed and re-opened the Tuple Mover performs redundant work to decompress the current block.The following image shows the bottom part of a mergeout plan. A StorageMerge operator reads from multiple storage containers and places the tuples it reads into sections of a buffer that it merges. When one section of the buffer is depleted, the corresponding storage container is re-opened and its next batch of tuples are read. The image assumes that the mergeout plan only has sufficient memory to open two storage containers at the same time. When the tm resource pool contains more memory, mergeout can open more containers at one time and buffer more tuples with each read. In this situation, each storage containers is opened much less frequently and performance may improve substantially.

Performance Results

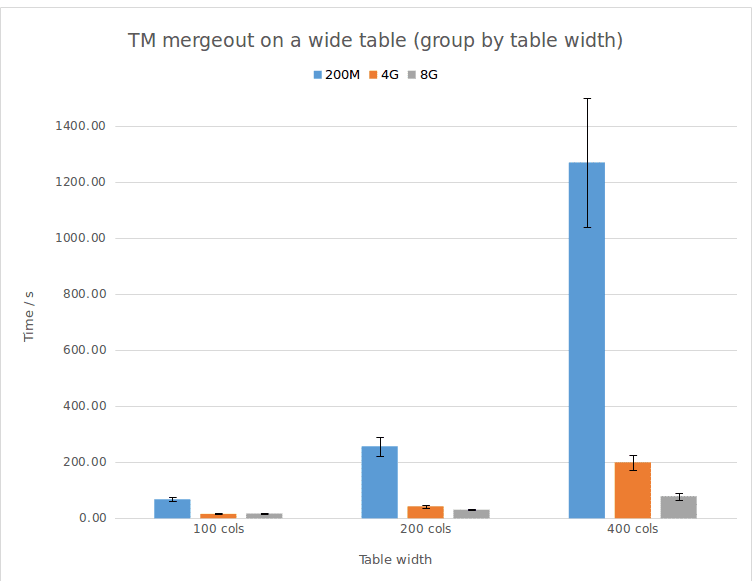

The following experiments demonstrate that, for wider tables, mergeout needs more memory to execute efficiently. As you can see in the chart, the default memory size (200MB) is generally too small, even for a table with just 100 columns. This resource limitation leads to poor performance. The wider the table, the worse the performance. If we increase the memory size until the memory size is big enough for the table, we can get better performance. Roughly 4GB is enough for tables with 100 and 200 columns. There is no significant difference between 4GB and 8GB for these two tables. A 400 column table, however, shows an obvious impact when memory size increases from 4GB to 8 GB.

* Error bars in the chart use the standard deviation of each test (nine times for each set of parameters).

** The output includes error bars (time – memory size).

View Tuple Mover Operations

You can query system tables such as tuple_mover_operations, resource_acquisitions, and resource_pools to compare the memory acquired by a mergeout plan with the configuration of the tm resource pool. The following example query joins these three tables and introduces a way to detect if increasing the memory size of the tm pool is likely to improve mergeout performance. If the memory acquired is greater than memory of the tm resource pool divided by the planned concurrency, the Tuple Mover is memory constrained. For example, the last record in the output table below shows that a mergeout plan on a 100 column table reserved 278MB, which is more than the default memory size (200MB). This difference indicates that that mergeout plan needs more memory than what is provided by the resource pool. This indicator strongly suggests that increasing the memory will improve performance.This query can identify the worst cases, however, it is possible for a mergeout plan to fit within the target memory and still perform poorly. It’s a good idea to experiment with larger memory sizes and to observe the impact of those changes on mergeout performance.

SELECT pool_name,

memorysize,

CONCAT( memory_inuse_kb // 1024, 'M') AS memory_usage_MB,

tuple_mover_operations.transaction_id,

table_schema,

table_name,

projection_name

FROM resource_acquisitions

INNER JOIN resource_pools

ON resource_acquisitions.pool_name = resource_pools.name

INNER JOIN tuple_mover_operations

ON resource_acquisitions.transaction_id = tuple_mover_operations.transaction_id

WHERE (tuple_mover_operations.operation_status = 'Complete'

AND pool_name = 'tm')

ORDER BY memory_usage_MB DESC

LIMIT 1;

pool_name | memorysize | memory_usage_MB | transaction_id | table_schema | table_name | projection_name

-----------+------------+-----------------+-------------------+--------------+------------+-----------------

tm | 8G | 4096M(=4G) | 45035996273706218 | public | t0 | t0_super

tm | 4G | 2048M(=2G) | 45035996273706167 | public | t0 | t0_super

tm | 200M | 278M | 45035996273706092 | public | t0 | t0_super

* memory_usage_MB – This value can be greater than memorysize. If the default resource pool memory size is insufficient for the mergeout operation, Vertica takes extra memory from the general pool. Vertica always remains as close as possible to the default memory size.** The Tuple Mover tries to stay within the limit of memorysize / plannedconcurrency. In this example, plannedconcurrency has the default value of 2.

*** Query results comes from three same queries.

Changing Resource Pool Size

As with any resource pool, you can change the value with an ALTER query. For more information, refer to the Vertica documentation.dbadmin=> SELECT name, memorysize, maxmemorysize FROM V_CATALOG.RESOURCE_POOLS WHERE name = 'tm';

name | memorysize | maxmemorysize

----------+------------+---------------

tm | 200M |

(1 row)

dbadmin=> alter resource pool tm memorysize '8G';

ALTER RESOURCE POOL

dbadmin=> SELECT name, memorysize, maxmemorysize FROM V_CATALOG.RESOURCE_POOLS WHERE name = 'tm';

name | memorysize | maxmemorysize

------+------------+---------------

tm | 8G |

(1 row)